A Lightweight Feature Reconstruction Paradigm Fusing Large Kernel Inductive Bias and Orthogonal Spatial Perception

If you are still wrestling with the "can't have it both ways" dilemma in edge computing, this article may be worth a read.

Abstract

In lightweight convolutional neural network design, balancing the "local receptive field" against "global spatial awareness" under a limited FLOPs budget has long been a core challenge.

Traditional convolutions are limited by their receptive field size, while the conventional SE block discards spatial position information because it relies on global pooling. To address this, we developed a new operator. It fuses large-kernel depthwise convolution with Coordinate Attention and, combined with a forced residual connection and GroupNorm, yields a feature extraction block that is hardware-friendly and retains robust position encoding.

1. Design Motivation and Theoretical Background

Before analyzing the code, we need to understand the three core pain points that this module attempts to solve:

- Limitations of the Effective Receptive Field (ERF): Traditional lightweight networks rely heavily on stacking $3 \times 3$ convolutions. Prior research indicates that the effective receptive field of a deep network is roughly Gaussian-shaped and covers only a fraction of the theoretical receptive field, a fraction that shrinks as depth grows, making it difficult to capture large-scale semantic targets.

- Spatial Misalignment: The standard SE module compresses the feature map to $C \times 1 \times 1$ via Global Average Pooling. Although this strengthens channel dependencies, it discards the spatial coordinate information of objects.

- Micro-Batch Instability: During transfer learning or fine-tuning on edge devices, memory constraints often force very small batch sizes (e.g., 2 or 4). The batch statistics estimated by BatchNorm then become noisy, which can destabilize training or even cause divergence.

The attention-fused convolution operator presented here is designed to address these three pain points.
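The spatial misalignment point can be illustrated with a toy example. The sketch below uses plain Python lists and hypothetical helper names (`global_avg_pool`, `pool_over_width`, `pool_over_height`), none of which come from the module itself. It compares an SE-style global average with two directional averages on a pair of single-channel maps whose only difference is where the activation sits.

```python
def global_avg_pool(fmap):
    """SE-style squeeze: one scalar per channel, all positions averaged together."""
    return sum(sum(row) for row in fmap) / (len(fmap) * len(fmap[0]))


def pool_over_width(fmap):
    """Average each row: one value per height index (vertical position kept)."""
    return [sum(row) / len(row) for row in fmap]


def pool_over_height(fmap):
    """Average each column: one value per width index (horizontal position kept)."""
    height = len(fmap)
    return [sum(row[j] for row in fmap) / height for j in range(len(fmap[0]))]


# Two toy single-channel 3x3 maps: same activation, opposite corners.
top_left = [[1.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
bottom_right = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 1.0]]

same_global = global_avg_pool(top_left) == global_avg_pool(bottom_right)
same_rows = pool_over_width(top_left) == pool_over_width(bottom_right)
same_cols = pool_over_height(top_left) == pool_over_height(bottom_right)
print(same_global, same_rows, same_cols)  # True False False
```

The global descriptor cannot tell the two maps apart, while either directional profile can, which is the property the coordinate-style pooling in Section 2.2 relies on.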

2. Core Architecture Breakdown

This module is not a simple stack of layers but a deliberately designed feature reconstruction loop. Below, we walk through it step by step alongside the code:

2.1 Inductive Bias from Large Kernel Depthwise Convolution

Code implementation:

self.dw_conv = nn.Conv2d(c1, c1, kernel_size=5, stride=s, padding=2, groups=c1, bias=False)

Design: The kernel size is increased from $3 \times 3$ to $5 \times 5$, which widens the "visual field" of a single neuron.

Theoretical advantage: The receptive field area of a $5 \times 5$ convolution is about 2.78 times ($25/9$) that of a $3 \times 3$ convolution. In lightweight networks (such as MobileNetV3), this large-kernel depthwise convolution can partially emulate the Token Mixer behavior in Transformers, enhancing the ability to capture texture and shape. Moreover, inference frameworks such as NCNN already provide well-optimized (e.g., Winograd-based) support for the DW operator.
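The arithmetic behind this trade-off is easy to check. The following sketch compares the kernel footprint and the weight count of depthwise $3 \times 3$ and $5 \times 5$ convolutions (with `bias=False`, as in the snippet above). The channel count `c1 = 64` and the helper names are assumptions chosen only for illustration.

```python
def kernel_area_ratio(k_large, k_small):
    """Ratio of the spatial footprints of two square kernels."""
    return (k_large ** 2) / (k_small ** 2)


def depthwise_weights(channels, k):
    """Weights of a depthwise conv (groups == channels, no bias)."""
    return channels * k * k


def standard_weights(c_in, c_out, k):
    """Weights of a dense (groups == 1) conv without bias."""
    return c_in * c_out * k * k


c1 = 64  # hypothetical channel count, not taken from the article

print(round(kernel_area_ratio(5, 3), 2))                   # 2.78
print(depthwise_weights(c1, 3), depthwise_weights(c1, 5))  # 576 1600
print(standard_weights(c1, c1, 3))                         # 36864
```

Under these assumptions, moving the depthwise kernel from 3 to 5 adds 1024 weights, a small amount next to the 36864 weights of a single dense $3 \times 3$ convolution at the same width.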

2.2 Orthogonal Feature Decomposition and Coordinate Attention

This is the "soul" of this module. Unlike SE's global pooling, this module uses two orthogonal 1D Global Pooling operations to decompose spatial information.

Step I: Orthogonal Projection

x_h = self.pool_h(feat) # Output: (N, C, H, 1)

x_w = self.pool_w(feat) # Output: (N, C, 1, W)

- Mathematical representation: The input tensor is aggregated along the horizontal and the vertical coordinate separately: x_h keeps one value per row (pooling over the width), and x_w keeps one value per column (pooling over the height). This yields two direction-aware feature maps, letting the network capture long-range dependencies along one spatial direction while preserving precise position information along the other.
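As a minimal sketch of Step I, assuming that `pool_h` and `pool_w` are average pools as in the original Coordinate Attention design (the article does not show their definitions), the two projections can be written as $z^h_c(h) = \frac{1}{W}\sum_{i} x_c(h, i)$ and $z^w_c(w) = \frac{1}{H}\sum_{j} x_c(j, w)$. In PyTorch this maps onto `nn.AdaptiveAvgPool2d` with `None` in the dimension that should be kept:

```python
import torch
import torch.nn as nn

# Toy feature map with shape (N, C, H, W) = (2, 3, 4, 5).
feat = torch.arange(2 * 3 * 4 * 5, dtype=torch.float32).reshape(2, 3, 4, 5)

# Assumed definitions: None keeps that dimension at its input size.
pool_h = nn.AdaptiveAvgPool2d((None, 1))  # average over width, keep height
pool_w = nn.AdaptiveAvgPool2d((1, None))  # average over height, keep width

x_h = pool_h(feat)  # (N, C, H, 1)
x_w = pool_w(feat)  # (N, C, 1, W)
print(tuple(x_h.shape), tuple(x_w.shape))  # (2, 3, 4, 1) (2, 3, 1, 5)
```

Each pooled tensor is just a mean over one spatial axis, so `x_h` matches `feat.mean(dim=3, keepdim=True)` and `x_w` matches `feat.mean(dim=2, keepdim=True)`.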

Step II: Cross-Dimensional Interaction and Dimensionality Reduction

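y = torch.cat([x_h, x_w.permute(0, 1, 3, 2)], dim=2) # x_w is transposed to (N, C, W, 1) first; result: (N, C, H + W, 1)

y = self.conv_pool(y) # channel reduction to mip

y = self.gn(y) # GroupNorm for stability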

- Optimization strategy: A bottleneck layer with reduction=16 is introduced here to reduce model complexity.

- Improvement: GroupNorm is the finishing touch. In the middle layer of the attention branch the channels are heavily compressed, and training often runs with very small batch sizes. GN normalizes over groups of channels within each sample, so its statistics do not depend on batch size, which avoids the "statistics drift" problem caused by BN layers in fine-tuning tasks.
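The batch-size independence of GroupNorm in this bottleneck can be checked directly. The sketch below is illustrative only: the bottleneck width `mip = max(8, c // 16)` follows common Coordinate Attention implementations and is an assumption here, as are the tensor sizes, and the `BatchNorm2d` layer exists purely for comparison. It normalizes the first sample once as part of a batch of four and once on its own.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
n, c, h, w = 4, 64, 6, 8
mip = max(8, c // 16)  # assumed bottleneck width (reduction=16)

x_h = torch.randn(n, c, h, 1)  # stand-in for pool_h output
x_w = torch.randn(n, c, 1, w)  # stand-in for pool_w output

conv_pool = nn.Conv2d(c, mip, kernel_size=1, bias=False)
gn = nn.GroupNorm(1, mip)
bn = nn.BatchNorm2d(mip)  # comparison only, left in training mode

# Transpose x_w so both tensors share the layout (N, C, L, 1), then concatenate.
y = torch.cat([x_h, x_w.permute(0, 1, 3, 2)], dim=2)  # (N, C, H + W, 1)
y = conv_pool(y)                                      # (N, mip, H + W, 1)

# Normalize sample 0 inside the full batch versus alone.
gn_batch_independent = torch.allclose(gn(y)[:1], gn(y[:1]), atol=1e-5)
bn_batch_independent = torch.allclose(bn(y)[:1], bn(y[:1]), atol=1e-5)
print(gn_batch_independent, bn_batch_independent)
```

GroupNorm gives the same result for the sample in both settings, whereas BatchNorm in training mode does not, because its statistics are computed across whichever samples happen to share the batch.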

Step III: Attention Recalibration

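x_h, x_w = torch.split(y, [feat.size(2), feat.size(3)], dim=2) # undo the concatenation

x_w = x_w.permute(0, 1, 3, 2) # back to (N, mip, 1, W)

a_h = self.conv_h(x_h).sigmoid() # (N, C, H, 1)

a_w = self.conv_w(x_w).sigmoid() # (N, C, 1, W)

out = identity_feat * a_w * a_h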

- Feature fusion: The final output feature map is the Hadamard (element-wise) product of the original features and the attention maps from both directions. This is equivalent to assigning each pixel on the feature map an "importance weight" computed from global context along its row and its column.
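The re-weighting relies on broadcasting: per position, $\text{out}_c(i, j) = \text{feat}_c(i, j) \cdot a^h_c(i) \cdot a^w_c(j)$. The following sketch uses random stand-ins for the sigmoid outputs (the shapes and values are illustrative assumptions) to show that the product of the two directional maps forms a full $H \times W$ weight map.

```python
import torch

torch.manual_seed(0)
identity_feat = torch.randn(1, 2, 3, 4)  # (N, C, H, W)
a_h = torch.rand(1, 2, 3, 1)             # stand-in for the row-wise gate in (0, 1)
a_w = torch.rand(1, 2, 1, 4)             # stand-in for the column-wise gate in (0, 1)

# Broadcasting expands (H, 1) and (1, W) to (H, W) before multiplying.
out = identity_feat * a_w * a_h
weight_map = a_h * a_w                   # explicit per-pixel weight, (N, C, H, W)

print(tuple(out.shape), tuple(weight_map.shape))  # (1, 2, 3, 4) (1, 2, 3, 4)
```

Each pixel is therefore scaled by exactly one row weight and one column weight, so the attention needs only $H + W$ values per channel rather than $H \times W$.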

2.3 Forced Residual Stream

if self.use_res:

    return x + out

return out

- Gradient flow protection: The attention mechanism is essentially a "soft gate". Because the sigmoid weights lie in (0, 1), the gated features are always attenuated, and early in training the weights may even be close to zero. The forced residual connection builds an identity mapping path, so that even in the worst case (attention branch failure) the module falls back to passing its input through unchanged, which keeps gradients flowing in deep networks and mitigates vanishing gradients.
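The effect of the residual path on gradients can be seen in a deliberately extreme toy case. In the sketch below, the helper `input_grad` and the gate logit of -20 are illustrative assumptions. A saturated gate multiplies the input, and the gradient reaching the input is compared with and without the skip connection.

```python
import torch


def input_grad(use_res: bool, gate_logit: float) -> torch.Tensor:
    """Gradient of sum(output) w.r.t. the input for a gated branch."""
    x = torch.ones(4, requires_grad=True)
    gate = torch.sigmoid(torch.tensor(gate_logit))  # soft gate in (0, 1)
    out = x * gate                                  # gated branch
    y = x + out if use_res else out                 # optional identity path
    y.sum().backward()
    return x.grad


grad_plain = input_grad(use_res=False, gate_logit=-20.0)  # gate is almost closed
grad_res = input_grad(use_res=True, gate_logit=-20.0)
print(grad_plain, grad_res)
```

Without the residual path, the gradient equals the gate value and nearly vanishes. With it, the gradient is one plus the gate value, so the signal still reaches earlier layers.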

3. Detailed Execution Flow and Tensor Evolution

To more clearly demonstrate the data flow inside this module, we formalize the forward process into the following detailed steps:

- Spatial Feature Extraction:

  - Input: x.

  - Pass through DWConv → PWConv → BN → Hardswish.

  - Output: intermediate feature feat.

- Coordinate Information Encoding:

  - H-Pooling: compress feat to x_h with shape (N, C, H, 1).

  - W-Pooling: compress feat to x_w with shape (N, C, 1, W).

- Transformation and Activation:

  - Concatenate x_h and x_w and reduce the channel dimension to mip via convolution (conv_pool).

  - Apply GroupNorm(1, mip) for normalization (with a single group, GroupNorm normalizes each sample over all channels and spatial positions, which matches LayerNorm over (C, H, W) with per-channel affine parameters).

  - Apply the non-linear activation function.

- Decoding and Re-weighting:

  - Split the feature tensor back into the spatially-aware weight vectors a_h and a_w.

  - out = identity_feat ⊙ a_h ⊙ a_w (where ⊙ denotes element-wise multiplication under broadcasting).

- Feature Reconstruction:

  - Final output: x + out (if the residual condition is satisfied).
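Putting the steps above together, a minimal end-to-end sketch might look as follows. This is a reconstruction under stated assumptions, not the author's exact implementation: the class name `LargeKernelCoordBlock`, the `pw_conv`/`bn`/`act` attribute names, the average-pooling choice, the bottleneck width `max(8, c2 // reduction)`, reuse of Hardswish inside the attention branch, and the residual condition `s == 1 and c1 == c2` are all hypothetical choices that the article does not spell out.

```python
import torch
import torch.nn as nn


class LargeKernelCoordBlock(nn.Module):
    """Hypothetical reconstruction of the block described in this article."""

    def __init__(self, c1, c2, s=1, reduction=16):
        super().__init__()
        # Spatial feature extraction: 5x5 DWConv -> 1x1 PWConv -> BN -> Hardswish.
        self.dw_conv = nn.Conv2d(c1, c1, kernel_size=5, stride=s, padding=2,
                                 groups=c1, bias=False)
        self.pw_conv = nn.Conv2d(c1, c2, kernel_size=1, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = nn.Hardswish()
        # Directional pooling (assumed to be average pooling).
        self.pool_h = nn.AdaptiveAvgPool2d((None, 1))
        self.pool_w = nn.AdaptiveAvgPool2d((1, None))
        # Bottleneck of the attention branch (width is an assumption).
        mip = max(8, c2 // reduction)
        self.conv_pool = nn.Conv2d(c2, mip, kernel_size=1, bias=False)
        self.gn = nn.GroupNorm(1, mip)
        self.conv_h = nn.Conv2d(mip, c2, kernel_size=1)
        self.conv_w = nn.Conv2d(mip, c2, kernel_size=1)
        # Assumed residual condition: shapes of input and output must match.
        self.use_res = (s == 1 and c1 == c2)

    def forward(self, x):
        feat = self.act(self.bn(self.pw_conv(self.dw_conv(x))))
        identity_feat = feat
        h, w = feat.shape[2:]

        x_h = self.pool_h(feat)                      # (N, C, H, 1)
        x_w = self.pool_w(feat).permute(0, 1, 3, 2)  # (N, C, W, 1)

        y = torch.cat([x_h, x_w], dim=2)             # (N, C, H + W, 1)
        y = self.act(self.gn(self.conv_pool(y)))     # (N, mip, H + W, 1)

        x_h, x_w = torch.split(y, [h, w], dim=2)
        x_w = x_w.permute(0, 1, 3, 2)                # (N, mip, 1, W)

        a_h = self.conv_h(x_h).sigmoid()             # (N, C, H, 1)
        a_w = self.conv_w(x_w).sigmoid()             # (N, C, 1, W)

        out = identity_feat * a_w * a_h
        return x + out if self.use_res else out


x = torch.randn(2, 32, 16, 16)
same_shape = LargeKernelCoordBlock(32, 32)(x)        # residual path active
downsampled = LargeKernelCoordBlock(32, 64, s=2)(x)  # no residual, stride 2
print(tuple(same_shape.shape), tuple(downsampled.shape))
```

With a stride of 1 and equal channel counts the output keeps the input shape, while the stride-2 variant halves the spatial size and skips the residual sum because the shapes no longer match.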

4. Experimental Verification and Data Visualization

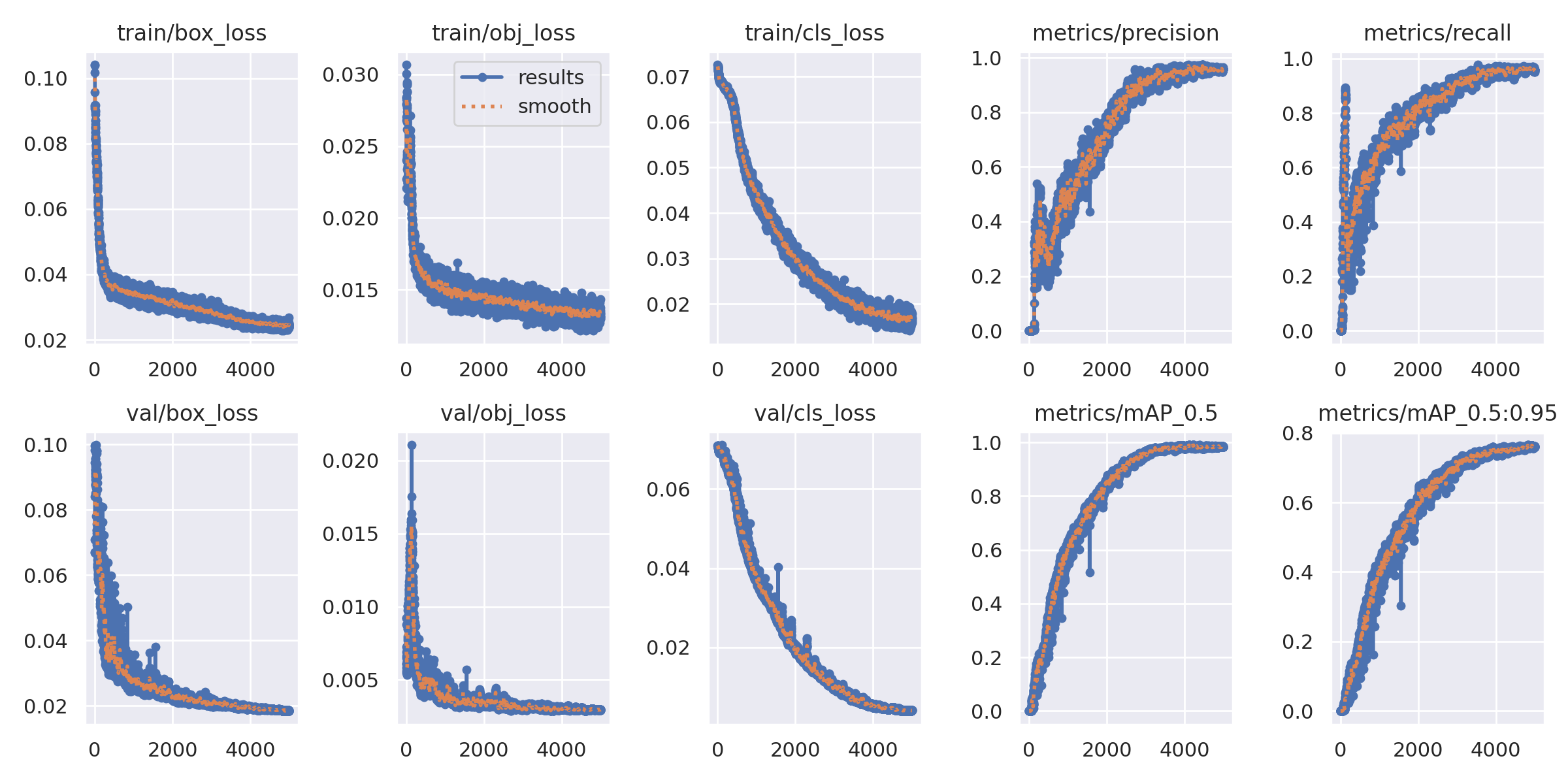

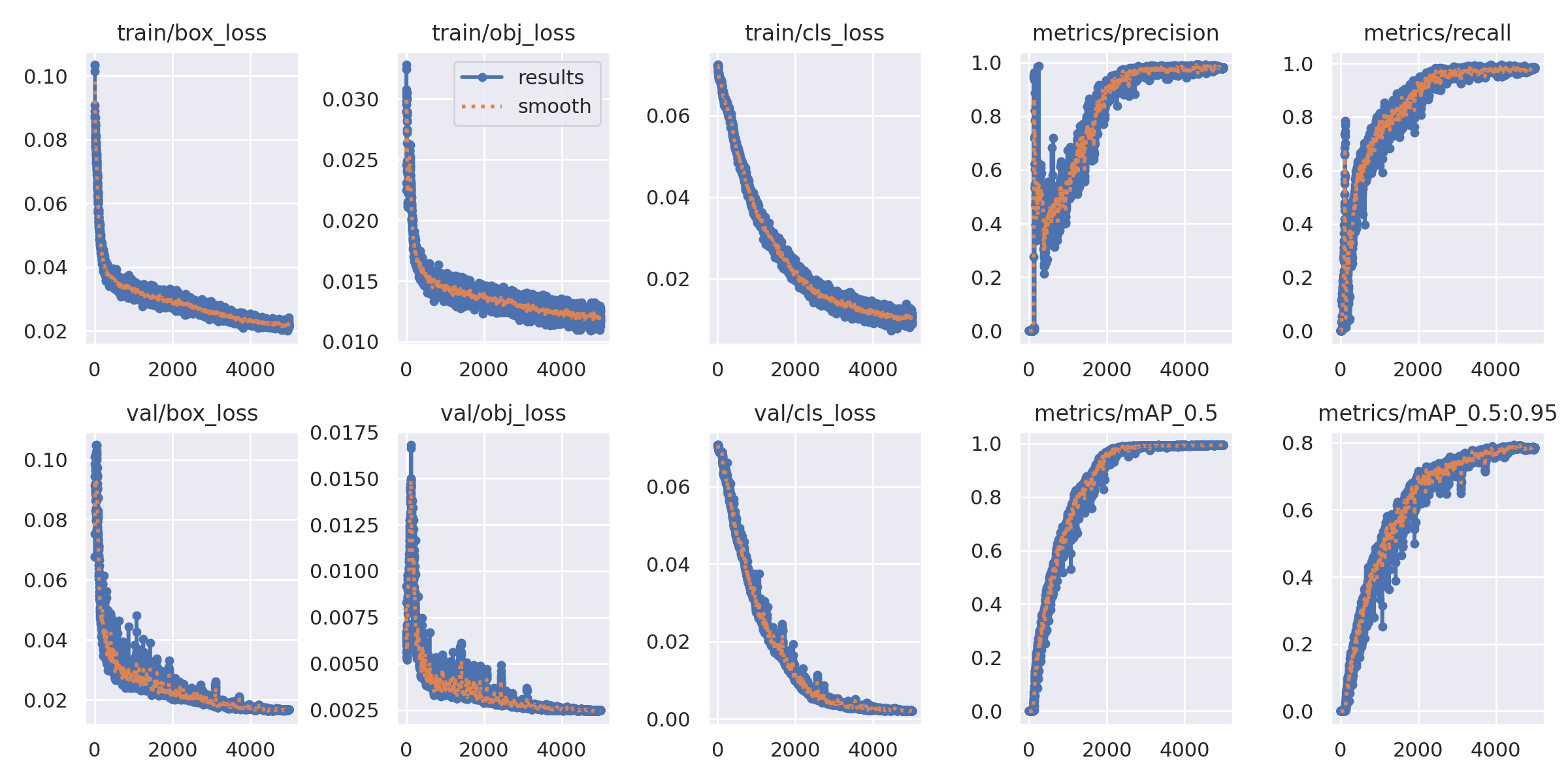

To verify the effectiveness of this convolution kernel in real-world scenarios, we conducted rigorous comparative experiments in a controlled environment.

Experimental Setup:

- Dataset: Custom detection dataset (including categories with high similarity such as batons, flashlights, knives).

- Training strategy: SGD optimizer, Cosine LR schedule, training for 5000 epochs (to ensure full convergence).

- Baseline: The module is replaced with a standard 3x3 DWConv, while the rest of the network architecture is kept exactly the same.

4.1 Overall Performance Evaluation: Trade-off Analysis between Computation and Accuracy

[Comparison table: COMMON vs. ENHANCE]

This suggests that the module's gain does not come from simply stacking parameters, but from more efficient spatial feature modeling that strengthens the model's representation capability.

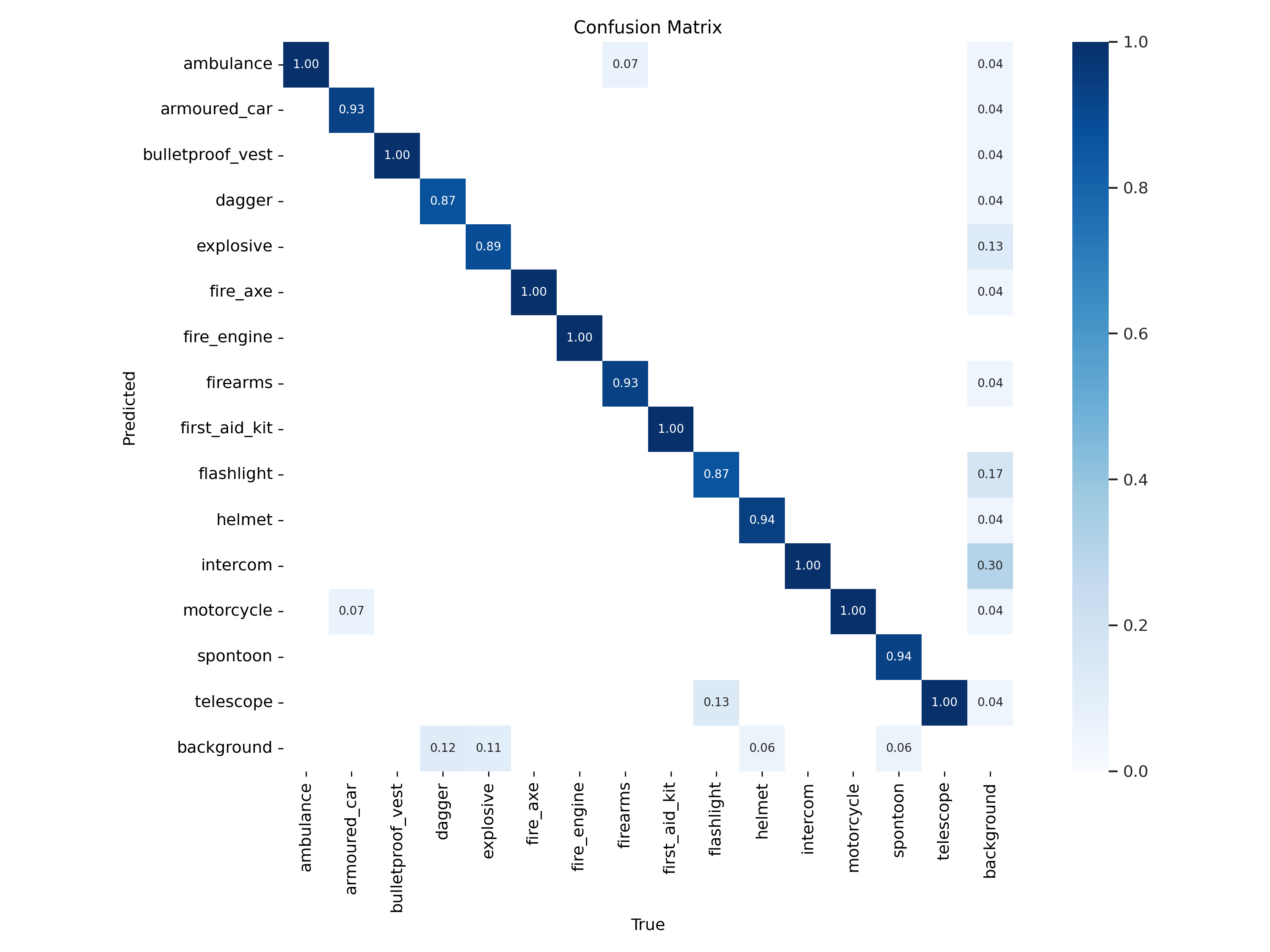

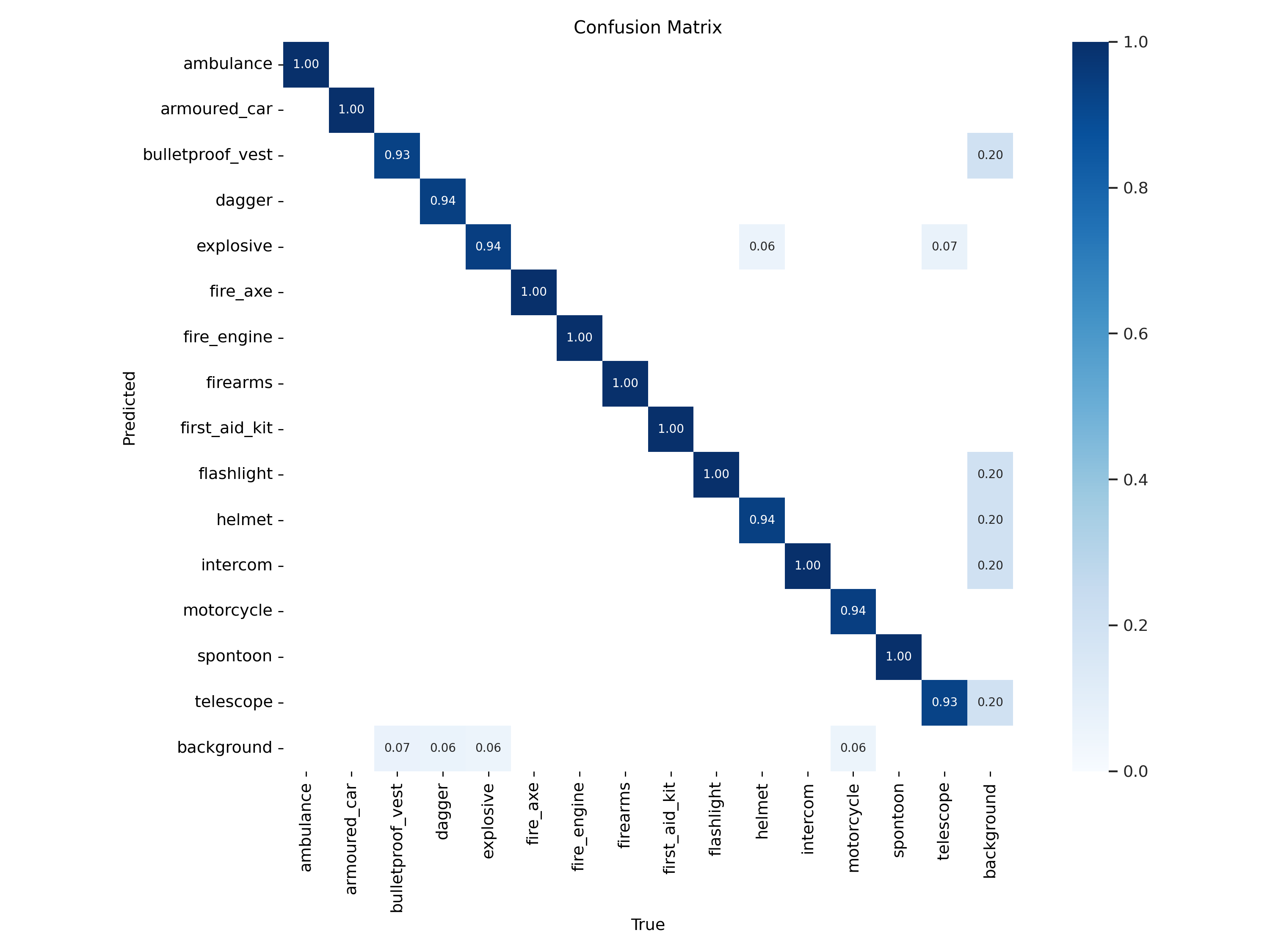

4.2 Hard Example Mining and Fine-grained Classification

In testing, "inter-class similarity" is the biggest challenge.

For example, a long "baton" and a "flashlight" are extremely difficult to distinguish at low resolution.

We extracted the Top-1 accuracy of the model on these specific categories for comparative analysis:

[Per-category Top-1 accuracy table: COMMON vs. ENHANCE]

5. Conclusion

This operator illustrates a forward-looking approach to lightweight network design.

- By using $5 \times 5$ depthwise convolution, it introduces a stronger spatial inductive bias.

- By using Coordinate Attention, it addresses the lack of position awareness in SE-style attention within standard CNNs.

- By using GroupNorm and Hardswish, it shows solid engineering awareness, making it practical for small-batch fine-tuning and edge inference scenarios.

This module is not only a plug-and-play component but also offers a reference spatial-channel decoupling pattern for future lightweight detection network design.